Top Stories

How to save GPU memory in LLM serving: Principles and operating conditions of KV cache offloading

By Kyujin Cho, Jinho Heo

Learn how KV cache offloading works in LLM serving for Agentic AI, covering architecture, data movement paths, and when offloading helps or hurts inference performance.

27 April 2026

Building Production RAG Systems: Lessons from Tariff Support

By Sergey Leksikov

Over the past year, we have built two production RAG systems addressing completely different tasks. One is HSense, a multi-agent system for Korean customs item classification, and the other is the Backend.AI RAG Assistant, which processes customer support queries based on seven document projects.

23 April 2026

Inside NVIDIA DGX Spark: Is DGX Spark Actually Blackwell?

By Jeongkyu Shin, Kyujin Cho

DGX Spark is a desktop AI supercomputer that packs 128GB of unified memory and 1 PFLOP-class Grace Blackwell (GB10) performance into a palm-sized box. However, its internal GPU belongs to the SM12x series, distinct from the data center-grade Blackwell (SM100). For the latest LLMs such as GLM-5, which rely heavily on MLA- and DSA-specific kernels, "Blackwell support" alone doesn't guarantee immediate compatibility. This creates a subtle architectural gap requiring separate code management for Hopper, data center Blackwell, and consumer Blackwell. The engineering team examines Spark, which is based on Blackwell but features a slightly different architecture.

19 February 2026

News

Lablup Joins the Python Software Foundation as a Participating Sponsor

By Lablup

Lablup is now a Participating Sponsor of the Python Software Foundation (PSF).

13 February 2026

Behind the Success: Lablup x Upstage Pass Phase 1 Evaluation for Sovereign AI Foundation Model Project

By Lablup

In January 2026, the Upstage consortium that Lablup is part of successfully passed the Phase 1 evaluation for the Korean government's Sovereign AI Foundation Model project. This initiative aims to protect national AI sovereignty by having the government provide support for GPUs, data, and talent development, while the private sector actively leverages these resources to develop frontier-grade AI foundation models. We sat down with team members from Upstage and Lablup to hear the behind-the-scenes story of our Phase 1 journey.

6 February 2026

Meet Lablup at CES 26

By Lablup

6 January 2026

Releases

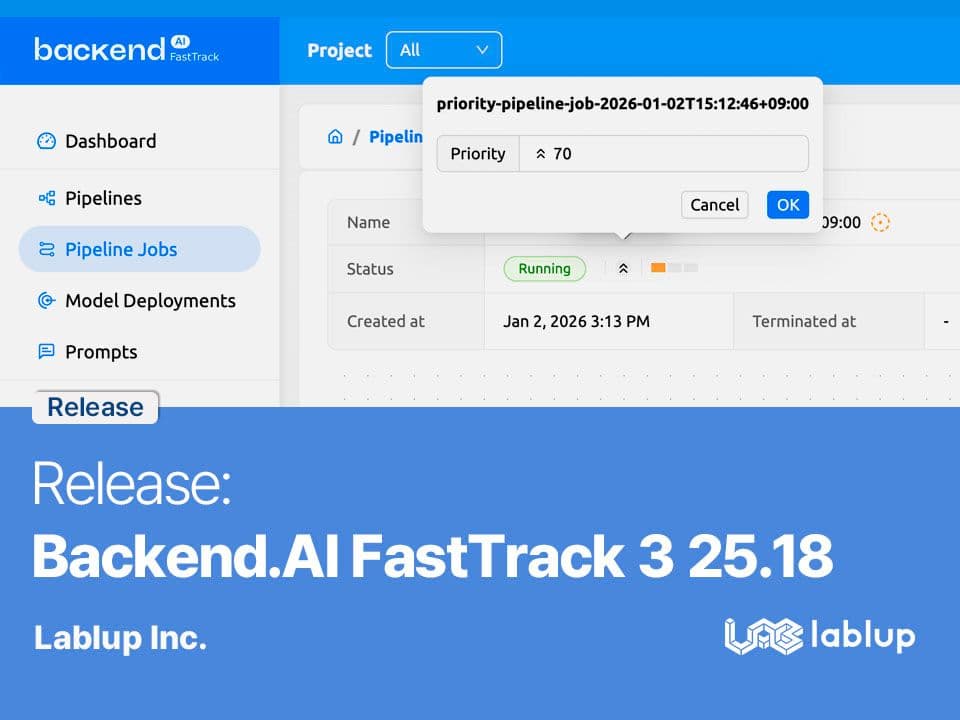

Release: Backend.AI FastTrack 3 25.18

By Lablup

This article covers the major changes in Backend.AI FastTrack 3 25.18.

5 January 2026

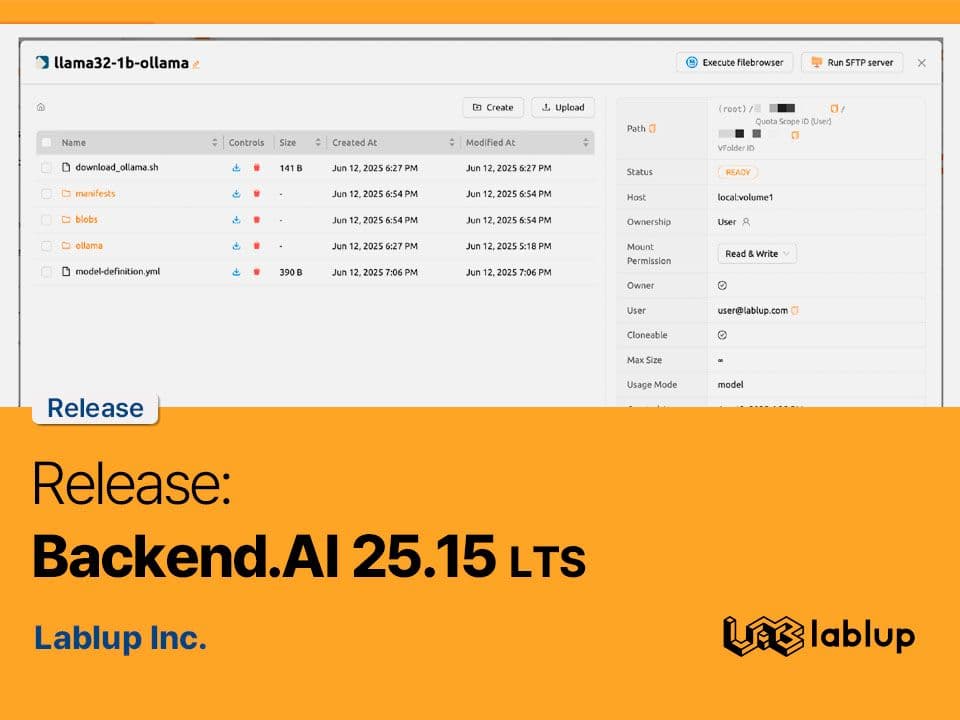

Release: Backend.AI 25.15 (LTS)

By Lablup

Backend.AI 25.15 LTS is now officially available. This release brings comprehensive system-level optimization and user experience improvements, reinforcing the platform's reliability and scalability for large-scale AI model training, deployment, and research.

2 October 2025

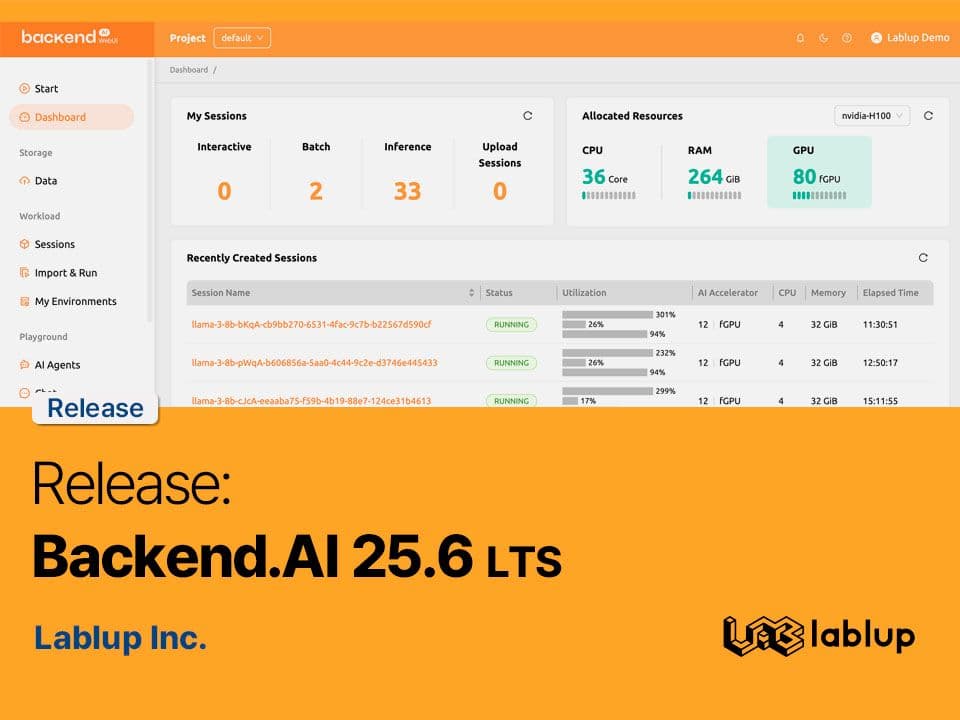

Release: Backend.AI 25.6 (LTS)

By Lablup

We're excited to announce Backend.AI 25.6, the first Long Term Support (LTS) release of 2025. This update brings significant improvements to system monitoring, audit logging, and model service auto-scaling, making operations more convenient than ever.

17 April 2025

Engineering

How to save GPU memory in LLM serving: Principles and operating conditions of KV cache offloading

By Kyujin Cho, Jinho Heo

Learn how KV cache offloading works in LLM serving for Agentic AI, covering architecture, data movement paths, and when offloading helps or hurts inference performance.

27 April 2026

Building Production RAG Systems: Lessons from Tariff Support

By Sergey Leksikov

Over the past year, we have built two production RAG systems addressing completely different tasks. One is HSense, a multi-agent system for Korean customs item classification, and the other is the Backend.AI RAG Assistant, which processes customer support queries based on seven document projects.

23 April 2026

Writing Stories for 50 Components: Foundation, Automation, and AI

By Seunghyun Lim

To write Storybook stories for 50+ BAI components in the Backend.AI WebUI, I started by setting up the infrastructure (i18n, theming, and branding), then upgraded to Storybook v10 and merged two instances into one. An automation pipeline combining a 1,000-line guideline, Claude-based story generation, and GitHub Actions CI checks kept quality consistent from PR creation through deployment. The key takeaway: build the foundation and automation first, and when working with AI, the human role shifts from writing code to defining and refining standards.

5 March 2026